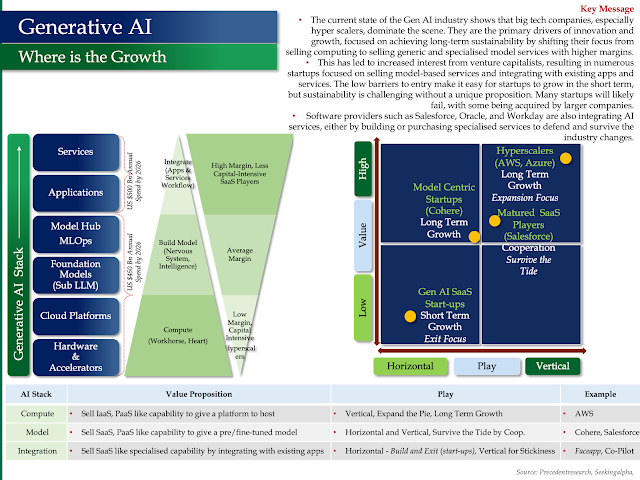

Generative AI - Where is The Growth?

- Big tech companies, especially the hyperscalers, dominate the Gen AI industry today. They are the primary drivers of innovation and growth, and they are pursuing long-term sustainability by shifting from selling compute to selling generic and specialised model services with higher margins.

- This has led to increased interest from venture capitalists, resulting in numerous startups focused on selling model-based services and integrating with existing apps and services. The low barriers to entry make it easy for startups to grow in the short term, but sustainability is challenging without a unique proposition. Many startups will likely fail, with some being acquired by larger companies.

- Software providers such as Salesforce, Oracle, and Workday are also integrating AI services, either building or purchasing specialised services to defend their position and survive the industry changes.

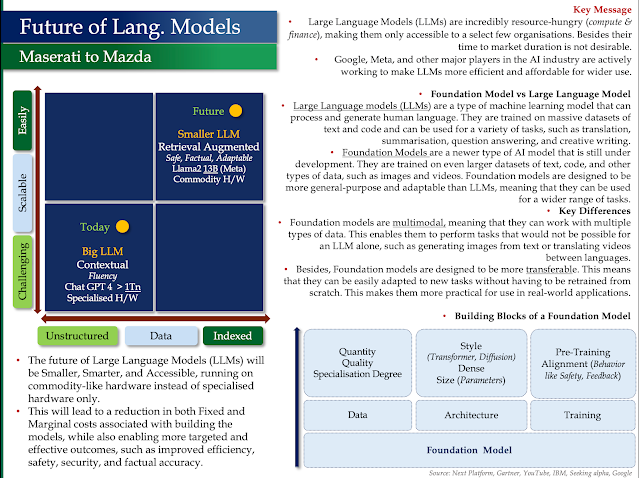

Future of Language Models

- Large Language Models (LLMs) are incredibly resource-hungry in both compute and cost, which makes them accessible to only a select few organisations. They also take an undesirably long time to bring to market.

- Google, Meta, and other major players in the AI industry are actively working to make LLMs more efficient and affordable for wider use.

Foundation Model vs Large Language Model

Large Language Models (LLMs) are a type of machine learning model that can process and generate human language. They are trained on massive datasets of text and code and can be used for a variety of tasks, such as translation, summarisation, question answering, and creative writing.

Foundation models are a newer and broader type of AI model, and the field is still developing. They are trained on very large datasets that can include text, code, and other types of data, such as images and videos. Foundation models are designed to be more general-purpose and adaptable than text-only LLMs, meaning that they can be used for a wider range of tasks.

Key Differences

Foundation models are often multimodal, meaning that they can work with multiple types of data. This enables them to perform tasks that would not be possible for a text-only LLM, such as generating images from text or translating videos between languages.

Foundation models are also designed to be more transferable: they can be adapted to new tasks, typically by fine-tuning, without having to be retrained from scratch. This makes them more practical for use in real-world applications.

My other posts on Generative AI and Strategic Analysis of Key Players

- AI Value Chain

- Amazon the King of Retail - Why AWS is the Crown Jewel

- Microsoft the King of AI in Software, Salesforce under the AI Cloud

- Tesla - It's not a Car, It's an AI Device on Wheels

- Google the King of Search - What the Future Beholds in the AI World

- Nvidia Godfather of AI - Why the Market is Bullish

- IBM - How it Lost Its Way

- Generative AI can transform Telecoms, Energy and Utilities
